Most organisations don’t struggle with CDP because they lack data. The difficulty sits in how that data moves once the process begins.

What looks like a single questionnaire is, in practice, a cross-functional workflow. Sustainability, legal, risk, finance and senior stakeholders all interact with the same material before it ever reaches the portal. Each group is reading for something slightly different, and each pass adds its own friction as spreadsheets circulate between departments.

“From the outside, CDP processing appears linear. Internally, it’s far from it.”

Where the time actually goes

Across a typical enterprise CDP submission, it’s not unusual for 80 to 150+ hours to be spent preparing, drafting, reviewing and submitting responses.

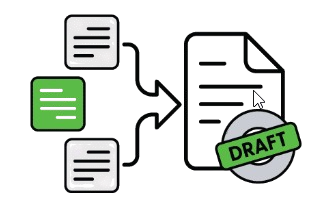

Reconstruction

Finding and reconciling prior responses

Draft assembly

Building a first version from scattered inputs

Version coordination

Managing feedback across stakeholders

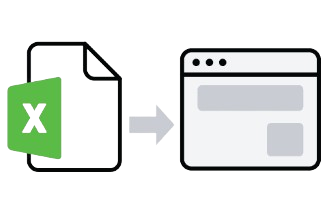

Manual transfer

Moving content between Excel and portal

These steps are necessary to move the process forward. But they don’t directly improve the quality of the submission.

That distinction matters.

A significant portion of CDP effort is tied up in supporting the workflow, not strengthening the outcome. And in most organisations, that pattern repeats every cycle.

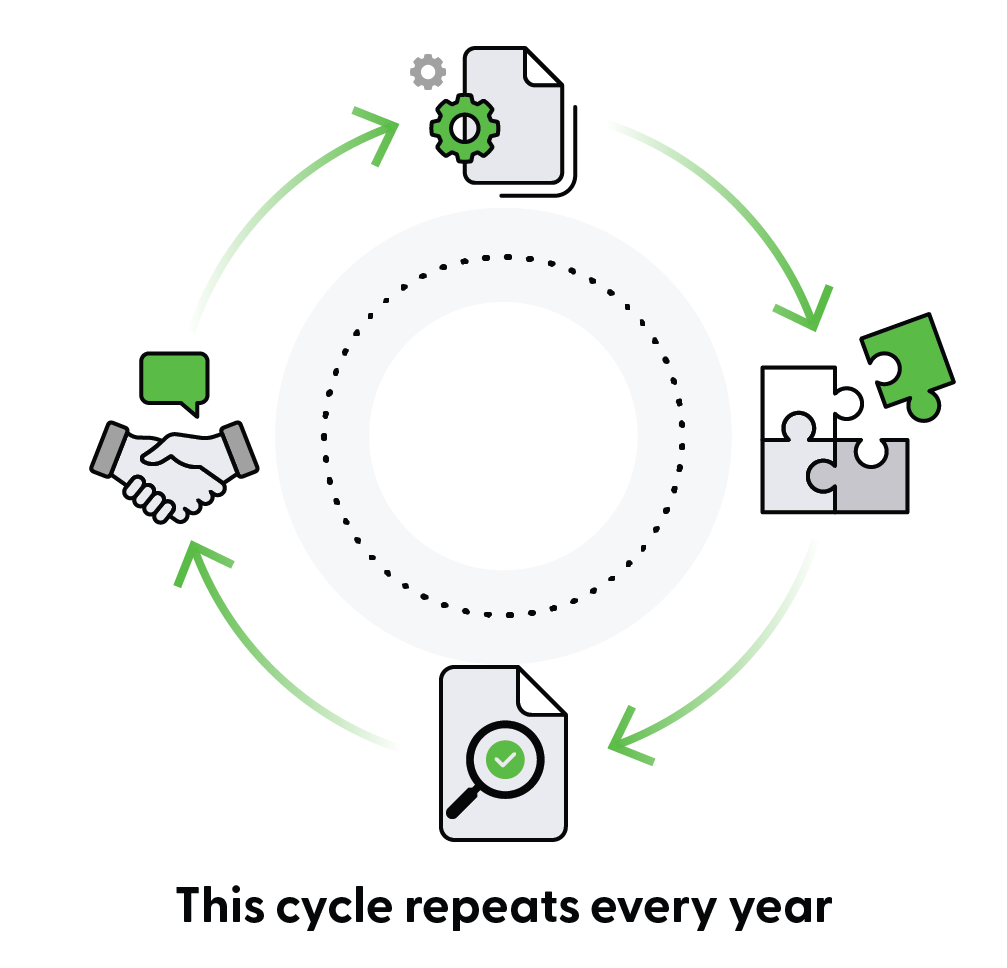

Across most enterprise teams, CDP follows the same four phases: data preparation, first draft, review and approval, and submission.

Each phase serves a different purpose. More importantly, each one consumes time for different reasons.

Once you separate those reasons, it becomes easier to see where effort is being spent supporting the process rather than improving the outcome.

Phase 1: Data preparation

Preparation is less about analysis than it first appears. In most organisations, it’s a consolidation exercise.

Prior responses are pulled together. Policies are checked for updates. Emissions data is validated. Supporting evidence is reviewed to confirm it still reflects current practice.

In reality, much of the time is spent locating information that already exists.

Teams search for last year’s “final” version, only to find several with different timestamps and no clear audit trail. Documents sit across shared drives, inboxes and exports, and each cycle begins with a degree of reconstruction.
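The search for last year’s “final” version can be narrowed mechanically before anyone applies judgement. The sketch below is illustrative only: it assumes each copy can be read as raw bytes with a last-modified timestamp, and the `Candidate` type and the function names are hypothetical. It groups byte-identical copies and keeps the newest of each, so the team compares only genuinely different versions. It does not decide which version was actually submitted.

```python
import hashlib
from dataclasses import dataclass
from datetime import datetime


@dataclass(frozen=True)
class Candidate:
    """One copy of a prior response found on a shared drive, inbox or export."""
    location: str       # hypothetical path or label for where the copy lives
    modified: datetime  # last-modified timestamp of that copy
    content: bytes      # raw file contents


def group_by_content(candidates):
    """Group copies whose bytes are identical, keyed by SHA-256 digest."""
    groups = {}
    for candidate in candidates:
        digest = hashlib.sha256(candidate.content).hexdigest()
        groups.setdefault(digest, []).append(candidate)
    return groups


def latest_distinct_versions(candidates):
    """Return the newest copy of each distinct content, newest first."""
    representatives = [
        max(group, key=lambda c: c.modified)
        for group in group_by_content(candidates).values()
    ]
    return sorted(representatives, key=lambda c: c.modified, reverse=True)
```

Hashing the contents rather than trusting file names means two files both called “final” are treated as the same version only when they really are identical.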

There is still judgement here. Deciding whether a policy update changes the framing of a response, or whether new metrics shift the narrative, isn’t something you can automate away.

But that’s not where most of the time goes.

It’s spent assembling and reconciling information that should already be accessible.

CDP Is Coming. Check Your Score Quality Today.

CDP questions and scoring guidance for 2026 are now available. See how EA can review previous submissions, identify weak answers, and highlight what to improve before the response window opens.

Phase 2: First draft

This is where material becomes structure.

Each question needs to align with current wording, formatting and scoring logic. Prior answers are adapted. New elements are introduced where required.

In manual environments, this phase expands with volume. The longer the questionnaire, the more time it takes, even when the underlying substance hasn’t changed significantly.

This is where repetition becomes most visible.

Similar narratives are assembled multiple times. Existing content is reshaped to fit slightly different prompts. Sections are drafted in isolation, then harmonised later.

Most teams already recognise this, which is why they don’t draft directly in the portal. Instead, they export the questionnaire into Excel so contributors can work in a familiar format.

“The spreadsheet becomes the workflow. Not the solution.”

This is where a large proportion of time is consumed.

Not in deciding what to say, but in assembling content that already exists into a usable first version.

See how teams are generating a usable first draft in a fraction of the time →

Without changing governance or review processes.

Where the pattern becomes clear

At this point, the same dynamic is visible across most teams.

Time is spent reconstructing prior information, reassembling known content into new formats, and managing versions as drafts circulate.

These steps keep the process moving.

But they don’t directly improve the quality of the submission.

That distinction is where the opportunity sits.

Phase 3: Review and approval

This is where governance does its work.

Legal reviews for defensibility. Risk looks for unintended commitments. Finance considers ratings and investor exposure. Sustainability maintains consistency across the narrative.

The value created here is real, and it should remain firmly human-led.

Where time is lost isn’t in the act of review.

It’s in the coordination around it.

Different versions circulate. Comments are reconciled manually. Teams spend time confirming whether they’re working on the latest document.
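Part of that coordination is simply establishing what changed between two copies of the same answer. As a minimal sketch, assuming each draft answer is available as plain text, Python’s standard `difflib` module can list the lines that differ. The function name `changed_lines` is hypothetical.

```python
import difflib


def changed_lines(old_text, new_text):
    """Return the lines removed ("- ") or added ("+ ") between two drafts."""
    diff = difflib.ndiff(old_text.splitlines(), new_text.splitlines())
    # ndiff also emits unchanged lines ("  ") and hint lines ("? ");
    # keep only the actual removals and additions.
    return [line for line in diff if line.startswith(("- ", "+ "))]
```

An empty result means the two copies carry the same text, which answers the “is this the latest document?” question for that answer without a manual read-through. Reviewers still decide which wording should stand.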

“The work is necessary. The challenges around it are not.”

Improving this phase isn’t about accelerating decisions. It’s about removing the noise around them, so attention stays on the content rather than on version control.

Phase 4: Submission

By the time content reaches submission, most decisions have already been made.

What remains is translation into the portal. Responses need to align with predefined fields. Character limits shape the narrative. Structured selections need to stay consistent with written answers.

The work is largely administrative, but it concentrates risk.

Errors introduced during transfer can undermine confidence in otherwise well-developed content.
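One class of transfer error can be caught before anything is pasted into the portal: answers that exceed a field’s character limit. The sketch below is hypothetical throughout. The `Answer` type, the question identifiers and the limits are placeholders, and it assumes the portal counts characters the same way Python’s `len` does, which should be confirmed against the real fields.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Answer:
    question_id: str  # hypothetical identifier, e.g. "Q1"
    text: str         # narrative response drafted offline
    char_limit: int   # assumed limit for the matching portal field


def find_overlong(answers):
    """Return (question_id, excess_chars) for every answer over its limit."""
    return [
        (answer.question_id, len(answer.text) - answer.char_limit)
        for answer in answers
        if len(answer.text) > answer.char_limit
    ]
```

Running a check like this on the offline draft means trimming happens during review, while the people who own the narrative are still involved, rather than at the point of entry.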

In practice, this phase rarely happens entirely in the portal. Most organisations default back to Excel. The questionnaire is downloaded, circulated, reviewed offline, and only then re-entered.

This isn’t a workaround. It’s a signal the process isn’t designed for how teams actually work.

Why this happens, and what it changes

Across all four phases, the pattern is consistent.

Human judgement and mechanical work are treated the same in most workflows. They shouldn’t be.

Some parts of CDP require judgement. Others are largely mechanical.

- Interpreting disclosures and managing risk requires human oversight

- Reconstructing responses, assembling drafts and transferring content does not

In most organisations, these are blended together, and the result is predictable.

Highly skilled teams spend time on tasks that don’t require their expertise, while the process becomes slower and harder to manage.

Once these are separated, the opportunity becomes clear.

- Review remains human-led

- Drafting and submission become significantly faster

- Coordination becomes simpler

In practice, this is where teams are reducing overall CDP processing time by around 40–60%, without reducing governance.
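To put that range in hours, the quick calculation below applies a 40 to 60% reduction to the 80 to 150 hour baseline quoted earlier. It is a rough illustration: it assumes both ranges describe the same kind of submission, and it treats 150 as the upper bound even though the original figure is open-ended (“150+”).

```python
def remaining_hours(baseline_hours, reduction_percent):
    """Hours left after cutting the baseline by the given percentage."""
    return baseline_hours * (100 - reduction_percent) / 100


LOW_BASELINE, HIGH_BASELINE = 80, 150    # hours per submission
LOW_REDUCTION, HIGH_REDUCTION = 40, 60   # percent reduction

# Smallest baseline with the largest reduction, and the reverse.
best_case = remaining_hours(LOW_BASELINE, HIGH_REDUCTION)
worst_case = remaining_hours(HIGH_BASELINE, LOW_REDUCTION)

print(f"Remaining effort: {best_case:.0f} to {worst_case:.0f} hours")
```

Under those assumptions, a submission would take roughly 32 to 90 hours rather than 80 to 150.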

What this looks like in practice

Instead of starting from scattered documents, leading teams are changing their starting point.

Previously approved CDP responses become structured inputs, not static files.

That means:

- a usable first draft exists earlier

- content is already aligned to the questionnaire

- review focuses on interpretation, not assembly
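As a rough illustration of “structured inputs”, the sketch below stores each approved answer against the question it was written for and uses that to seed the next cycle’s draft. Everything here is an assumption for illustration: the `PriorResponse` type, the status labels, and in particular the idea that question identifiers stay stable between cycles.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PriorResponse:
    question_id: str    # hypothetical identifier, assumed stable across cycles
    question_text: str  # wording the answer was approved against
    answer: str         # previously approved answer


def seed_draft(current_questions, prior_responses):
    """Build a first draft keyed by question ID.

    current_questions maps question_id -> this cycle's wording.
    Each draft entry is (answer_or_None, status), where status is
    "carried_forward", "wording_changed" or "new".
    """
    prior = {p.question_id: p for p in prior_responses}
    draft = {}
    for question_id, text in current_questions.items():
        match = prior.get(question_id)
        if match is None:
            draft[question_id] = (None, "new")
        elif match.question_text == text:
            draft[question_id] = (match.answer, "carried_forward")
        else:
            draft[question_id] = (match.answer, "wording_changed")
    return draft
```

The status labels are what direct review effort: answers marked “wording_changed” or “new” need interpretation, while carried-forward answers mainly need a check that they still reflect current practice.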

The process doesn’t change. The effort required to support it does.

Check Your CDP Score Quality Before It’s Too Late

See how EA reviews previous submissions against the latest scoring guidance, flags weak answers, and highlights what to improve. Get ahead of schedule and avoid the bottlenecks.