If you run CDP inside a large organisation, you don’t need anyone to explain what the cycle looks like. You’ve done it before, and you already know roughly how long it’s going to take. That holds unless the workflow itself has been restructured, as it has been for teams using EA, an AI agentic workflow platform for CDP and sustainability disclosures.

Although CDP may sit within sustainability, the submission rarely belongs to one function alone:

- Legal will read it for exposure and wording risk.

- Risk and finance consider how it may be interpreted externally.

- Corporate communications look for alignment and consistency.

The same set of answers carries different implications across the organisation, which is why the process never feels purely administrative. The cycle itself follows a familiar sequence:

- The questionnaire opens.

- You pull last year’s submission to see what still holds.

- You check whether policies have shifted, whether emissions data is fully signed off, whether any wording now feels too exposed in light of current expectations.

- A working draft begins to take shape.

- It circulates to a small internal group, then gradually across functions.

At some point, often later than ideal, someone notices a character constraint that forces a rethink of phrasing. Or two slightly different versions of the same answer resurface in parallel documents. These moments are familiar. They’re rarely dramatic, but they add friction.

None of this is unusual; it’s how CDP gets handled in most businesses.

What’s less often questioned is how much of that time is essential, and how much of it is the by-product of a workflow that hasn’t materially evolved.

Where the Time Really Goes

When teams review a completed CDP cycle, three areas consistently dominate the timeline: assembling the first draft, coordinating review, and managing submission.

The first draft is rarely quick, even in organisations that have responded for years. Prior answers exist, but they’re dispersed across portal exports, internal repositories and working files. Some sections require modest updates; others need careful reframing because the question has shifted, the metrics have moved or the narrative needs tightening.

In disclosures that run to several hundred questions, and in some cases closer to a thousand, drafting effort scales with volume. In a manual environment, there is no compression mechanism. Assembly expands proportionally.

Review then introduces a different form of complexity.

At this stage, coordination becomes less about sequence and more about perspective:

- Sustainability is focused on whether the narrative holds together and reflects current strategy.

- Legal reads the same wording for defensibility and unintended exposure.

- Risk considers how it might be interpreted if challenged externally.

- Finance is alert to phrasing that could influence ratings or investor perception.

When those perspectives are coordinated through spreadsheets, tracked Word documents and email threads, version control becomes a parallel task. Teams spend time confirming that everyone is working from the latest iteration before they can focus on improving the content itself.

That spreadsheet coordination is not accidental. It’s structural. In most organisations, the questionnaire ultimately becomes an Excel working document regardless of where it started. Contributors are familiar with the format, comments accumulate naturally, and approvals can be tracked informally as the file circulates.

The challenge is not that Excel exists in the workflow. It’s that the process of assembling and reconstructing answers inside it remains largely manual.

Even organisations with ESG platforms in place will typically export questionnaires into Excel so that contributors can work in a familiar environment. The file circulates, comments accumulate, approvals are noted, and only then is content transferred back.
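The version drift that builds up while files circulate can be checked mechanically before anyone spends review time on it. The sketch below is a minimal illustration, not a description of how EA or any ESG platform works: the function name, the file names and the question IDs are all hypothetical, and it assumes each working file has already been read into a simple mapping of question ID to answer text.

```python
from collections import defaultdict


def find_divergent_answers(documents):
    """Return the questions whose wording differs between working files.

    `documents` maps a file name to {question_id: answer_text}.
    Whitespace and letter case are normalised first, so only real
    wording differences are reported. (Hypothetical helper.)
    """
    variants = defaultdict(lambda: defaultdict(list))
    for doc_name, answers in documents.items():
        for question_id, text in answers.items():
            normalised = " ".join(text.split()).lower()
            variants[question_id][normalised].append(doc_name)
    # Keep only questions with more than one distinct wording.
    return {
        question_id: dict(by_text)
        for question_id, by_text in variants.items()
        if len(by_text) > 1
    }


# Placeholder data: two circulating copies of the same draft.
working_files = {
    "legal_review.xlsx": {"Q1": "Draft wording A", "Q2": "Shared wording"},
    "finance_review.xlsx": {"Q1": "Draft wording B", "Q2": "Shared  wording"},
}

divergent = find_divergent_answers(working_files)
for question_id, by_text in divergent.items():
    print(question_id, "has", len(by_text), "competing versions")
```

A check like this only flags that two versions exist. Deciding which wording stands remains a judgement call for the people reviewing it.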

Most tools either assume that everything should happen inside a pre-designed web form, or they leave the coordination entirely manual. EA sits between those two approaches, supporting both.

A project owner can assign questions to legal, risk or corporate communications within the platform, or export the draft into Excel and manage approvals externally before re-uploading. To put it more succinctly: the workflow flexes around the organisation rather than forcing the organisation to flex around the tool.

Submission is often treated as the final administrative step, but it’s where field-by-field copy risk concentrates. Approved responses have to be re-entered into the portal environment, where formatting quirks and rigid character limits often surface only at this late stage.

Even when the thinking is complete, small adjustments made during transfer can introduce inconsistencies that weren’t present in the reviewed draft.
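Character limits are one constraint that can be tested long before transfer. The sketch below is illustrative only: the `Answer` structure, the question IDs and the limits are assumptions made for the example, and the real limits would need to come from the current questionnaire. It also assumes the portal counts characters the same way Python’s `len` does, which may not hold.

```python
from dataclasses import dataclass


@dataclass
class Answer:
    question_id: str  # hypothetical identifier
    text: str
    char_limit: int   # assumed limit for this field


def find_over_limit(answers):
    """Return (question_id, characters_over) for each answer that is too long."""
    return [
        (answer.question_id, len(answer.text) - answer.char_limit)
        for answer in answers
        if len(answer.text) > answer.char_limit
    ]


# Placeholder answers; "x" * 120 stands in for a long narrative response.
answers = [
    Answer("Q1", "A short approved response.", 100),
    Answer("Q2", "x" * 120, 100),
]

over_limit = find_over_limit(answers)
print(over_limit)  # [('Q2', 20)]
```

Running a check like this while the draft is still in review means any trimming happens in front of the people who approved the wording, rather than during re-entry.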

None of these activities are conceptually complex. They are cumulative. Together, they explain why CDP preparation often feels heavier than it appears from the outside.

The Shift Isn’t About Working Faster. It’s About Working Differently.

Enterprises reporting 40–60% reductions in preparation time haven’t achieved that by lowering standards. In many cases, governance becomes clearer rather than lighter.

The shift comes from distinguishing between judgement and reconstruction.

Judgement (framing a response, assessing exposure, aligning with internal strategy and securing sign-off) should remain deliberate and human-led.

Reconstruction (retrieving prior language, reshaping similar narratives, reconciling parallel drafts and adapting content to portal constraints) is necessary in a manual workflow, but it doesn’t inherently add insight.

In most large submissions, teams estimate that the first draft and review phases together account for the majority of total cycle time. When reconstruction inside those phases is reduced, overall timelines contract without changing who holds authority.

Once that distinction is visible, the question becomes practical rather than theoretical. Which parts of CDP genuinely require heavy manual effort, and which parts persist because the workflow hasn’t been redesigned?

A Practical Way to Look at CDP: Four Phases

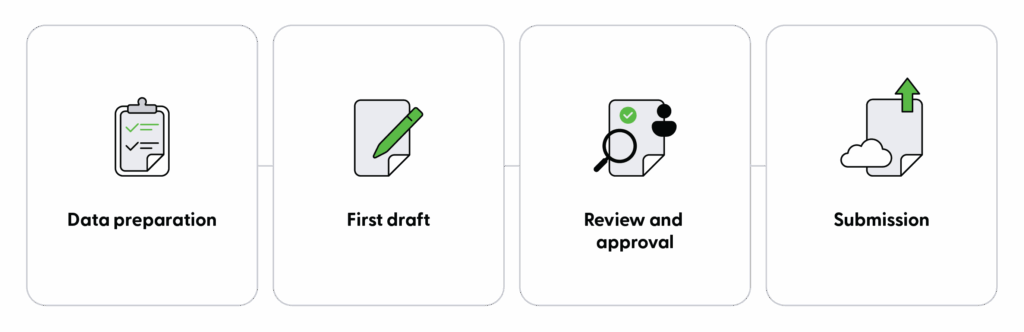

Most enterprise CDP cycles can be broken into four stages:

- Preparation: Retrieve prior responses, validate data, confirm what has changed.

- First Draft: Assemble a coherent response set aligned to the current questionnaire.

- Review: Bring in legal, risk and senior stakeholders to challenge and refine.

- Submission: Transfer finalised content accurately into the portal.

In a fully manual environment, the first draft and review stages absorb the largest share of effort. Preparation varies depending on how well institutional knowledge has been organised. Submission compresses operational risk into a short window.

Teams seeing measurable time reductions haven’t removed any of these stages. They’ve reduced the reconstruction within them.

Reaching a stable, review-ready draft earlier allows stakeholders to focus on substance rather than on incomplete fragments. Managing review in a single structured environment reduces reconciliation work. Preparing submission through structured alignment reduces the chance of discovering constraints late.

The governance model doesn’t change. What changes is how much time is spent holding the process together. For a deeper breakdown of these four stages and where time typically leaks, see: The 4 Phases of CDP and Where Enterprise Teams Lose the Most Time.

What This Looks Like in Enterprise Context

EA enterprise pilots, which focused on workflow design rather than on automation claims, have shown that substantial time can be removed from drafting and coordination without weakening oversight.

Teams have reached coherent first drafts materially earlier in the cycle. Review ownership has been clearer. Duplication across versions has fallen. In larger submissions running to hundreds of questions, even incremental compression per section compounds meaningfully.

Submission-stage integration depends on implementation scope. Where integration is feasible, manual transfer risk can be reduced further. Even without it, reducing reconstruction upstream has had a measurable effect on overall preparation timelines.

In several cases, teams have continued to manage stakeholder approvals in Excel, simply using EA to generate the first draft and consolidate final answers into a structured source of truth.

In others, approvals have been handled inside the platform through assignment and multi-level sign-off logic. The efficiency gain does not depend on mandating one model. Instead, it comes from removing the mechanical copying and re-entry that sits between them.

This is not about making CDP effortless. It’s about concentrating effort where it adds value.

See how this worked in practice in the enterprise case study.

Why This Matters Now

CDP questionnaires evolve. Scoring methodologies shift. Internal scrutiny increases. At the same time, sustainability teams are managing adjacent disclosure work and broader strategic responsibilities.

If CDP continues to rely on manual reconstruction and fragile coordination, it will continue to absorb capacity that could be deployed more strategically.

Compressing preparation time isn’t about accelerating disclosure at the expense of rigour. It’s about ensuring that hours are invested in judgement rather than in version management and field-by-field copy risk.

For enterprise teams running CDP annually, that distinction shapes whether delivery feels controlled or reactive.

Where to Go Next

If you want to understand where time is actually leaking inside your own CDP cycle:

- Read: The 4 Phases of CDP and Where Enterprise Teams Lose the Most Time

- Explore: Enterprise Case Study

- Or see how the EA workflow can shorten your CDP process: Book a Demo