CDP preparation inside a large organisation doesn’t sit neatly with one team, and it doesn’t move in a straight line from draft to submission. It loops through data owners, legal, risk and senior stakeholders before it ever reaches the portal.

For most teams, the cycle also has a predictable side-effect. It temporarily reorganises your calendar, and you feel the drag across weeks rather than days.

From a distance, it looks like a single questionnaire. In practice, it behaves more like a cross-functional project that draws on several functions at once. That’s why it’s difficult to improve unless you break it down into its phases.

Although sustainability coordinates the cycle, the review psychology isn’t uniform:

- Legal reads for defensibility under external interpretation.

- Risk looks for unintended commitments.

- Finance is sensitive to ratings and investor exposure.

- Sustainability works to protect narrative coherence across the whole submission.

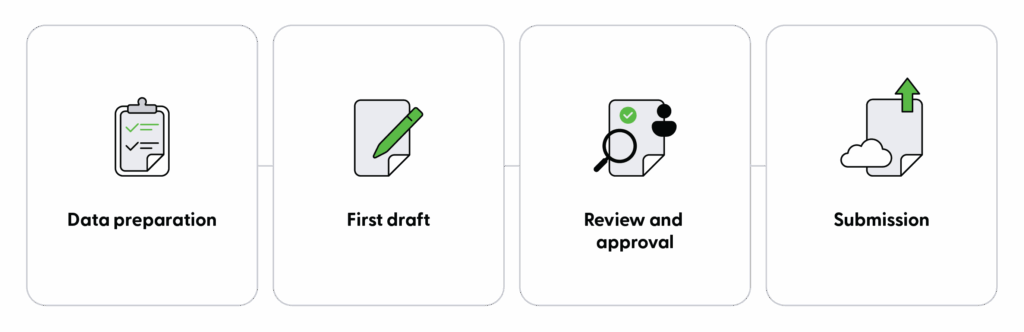

When you look closely, CDP preparation follows a fairly consistent pattern. The same phases repeat each year. What changes is how efficiently those phases are handled, and how much time is absorbed by coordination rather than judgement.

Understanding that structure is the first step toward reclaiming time without weakening oversight.

This is also the lens through which EA structures the workflow, separating mechanical effort from areas that require human judgement.

Phase 1: Data Preparation

What happens:

Preparation is a consolidation exercise. Prior responses are retrieved. Policies are checked for updates. Emissions data is validated against internal systems. Supporting evidence is reviewed to ensure it still reflects current practice. Archived folders are searched for last year’s “final” version, only to find several “final” files with different timestamps and no clear audit trail.

The time absorbed in this phase is rarely about analysis. It’s about locating and reconciling information that already exists. When prior disclosures are stored as static exports rather than structured references, teams spend disproportionate effort reconstructing their own history.
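As a small illustration of the reconciliation problem, the sketch below groups archived files by content hash, so that byte-identical “final” copies can be told apart from versions that genuinely differ. The function name `group_by_content` and the folder layout are hypothetical, and this is not a description of how EA works. It is only one way a team might triage an archive before reviewing it by hand.

```python
import hashlib
from collections import defaultdict
from pathlib import Path


def group_by_content(folder):
    """Map each SHA-256 content digest to the file names that share it.

    Files with the same digest are byte-identical copies; files with
    different digests need a human to decide which one is authoritative.
    """
    groups = defaultdict(list)
    for path in sorted(Path(folder).rglob("*")):
        if path.is_file():
            digest = hashlib.sha256(path.read_bytes()).hexdigest()
            groups[digest].append(path.name)
    return dict(groups)
```

A digest that maps to several names indicates redundant copies. Several digests among files all labelled “final” indicate versions that still need reconciling, which is the judgement step that remains manual.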

Where judgement matters:

That said, preparation does require informed judgement. Determining whether a policy revision changes the framing of a response, or whether updated metrics alter the narrative, cannot be delegated to process alone.

The opportunity here is not to remove scrutiny. It’s to reduce the time spent rediscovering information that the organisation already holds.

Within EA, prior disclosures and supporting data are surfaced as structured references, reducing the need to reconstruct past submissions manually.

Phase 2: First Draft

What happens:

The first draft converts material into structure. Each question requires a response aligned to current wording, formatting and scoring logic. Prior answers are adapted. New elements are introduced where required.

In manual environments, this phase expands predictably with questionnaire length. Drafting effort scales with volume, even when the underlying substance remains consistent year on year.

Where time is wasted:

Time is lost primarily through repetition. Similar narrative elements are reassembled multiple times. Existing content is copied and reshaped to fit slightly different prompts. Sections are drafted independently before being harmonised later.

In enterprise contexts, teams consistently experience this phase as one of the dominant time sinks because it scales directly with question volume and rework.

Where judgement matters:

Human input is central here. Framing decisions, clarity of explanation and alignment with internal strategy all require judgement. However, the mechanical act of assembling a coherent baseline from prior material does not inherently require prolonged manual effort.

When teams compress this phase, they do so by streamlining assembly, not by reducing oversight. In practice, that assembly rarely happens inside the disclosure portal itself.

Most enterprises extract the questionnaire into Excel so that contributors can work in a familiar format. The spreadsheet becomes the working draft that moves between functions before anything is submitted.

EA addresses the assembly step by building a structured first draft from prior disclosures, policies and supporting materials, allowing teams to start from a coherent baseline rather than rebuilding content manually.

Phase 3: Review, Edit and Approval

What happens:

Phase 3 is where governance operates formally. Draft responses are scrutinised by legal teams, risk teams, data owners and senior stakeholders. Wording is tested. Exposure is assessed. Consistency across sections is examined.

Review rarely fails because people don’t care. It slows down because different functions are reading for different risks, and inconsistencies get discovered late:

- Legal may push back on phrasing that reads as a commitment under regulatory interpretation.

- Risk may question language that creates exposure if challenged externally.

- Finance may react to score-sensitive wording that could influence ratings or investor perception.

- Sustainability is trying to keep responses accurate, coherent, and aligned across the full narrative.

The value generated in this phase is substantive. It strengthens the credibility of the final submission.

Time loss tends to occur not in the review itself, but in the coordination surrounding it. Parallel versions circulate. Comments are reconciled manually. Ownership of revisions may be unclear. Teams invest effort confirming that the document under review is indeed the most recent version.

Reducing friction in this phase improves clarity without diminishing scrutiny. A well-structured review environment allows stakeholders to focus on content rather than version management, so the review itself remains human-led by design.

Phase 4: Submission

What happens:

Submission translates approved content into the portal environment. Formatting must align with predefined fields. Character limits constrain narrative length. Selections in structured fields must remain consistent with written responses.

Although intellectually straightforward, this phase concentrates operational risk. Errors introduced during transfer can undermine confidence in otherwise robust content.
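One kind of transfer error, narrative text that exceeds a field’s character limit, can be caught before anything is entered. The sketch below is a minimal example under stated assumptions: the `Answer` type, its field names and the limits used are hypothetical and do not reflect the portal’s actual schema or limits.

```python
from dataclasses import dataclass


@dataclass
class Answer:
    question_id: str  # hypothetical identifier for a questionnaire field
    text: str         # approved narrative response
    char_limit: int   # assumed maximum length for that field


def find_overlong(answers):
    """Return (question_id, excess_characters) for each answer over its limit."""
    return [
        (a.question_id, len(a.text) - a.char_limit)
        for a in answers
        if len(a.text) > a.char_limit
    ]
```

Running a check like this on the approved draft flags responses that need trimming while reviewers are still available to approve the shorter wording, rather than at the point of entry.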

The majority of the work in Phase 4 is administrative. Decisions have already been made. What remains is accurate representation within the portal’s constraints.

In practice, that administrative work rarely happens neatly inside a portal. Even when platforms provide structured interfaces, most large organisations default to Excel to coordinate responses. The questionnaire is downloaded, circulated, annotated, approved offline, and only then re-entered. That isn’t a bad habit. It’s how cross-functional review actually works.

The operational difference with EA is not that it replaces that Excel workflow, but rather that it accepts it. Teams can generate a structured first draft back into Excel, circulate it as they always have, and then re-upload an updated version.

This allows EA to fit directly into existing workflows without requiring teams to change how they collaborate across functions.

Alternatively, where stakeholders prefer, answers can be assigned and approved directly in-platform, with a visible audit trail. The point is not to force a new behaviour, but rather to remove unnecessary assembly work within the behaviour that already exists.

Where Reclaiming Time Is Most Feasible

Viewed analytically, the pattern is clear:

- Phase 3 centres on judgement and should remain controlled by experienced stakeholders.

- Phases 2 and 4 contain significant mechanical components that can be streamlined without affecting decision-making authority.

- Phase 1 benefits from structured organisation but retains a layer of necessary evaluation.

EA is designed around this same distinction, focusing automation on assembly and coordination while leaving judgement with internal stakeholders.

Enterprises that have approached CDP through this structured lens have reported meaningful reductions in overall preparation time, particularly through compressing drafting and submission mechanics while maintaining full review processes.

In our enterprise pilots, the improvements observed have been faster baseline draft assembly and clearer review coordination. The emphasis has been on workflow clarity rather than on replacing human involvement.

The conclusion is not that CDP can be simplified. It is that its phases can be treated differently.

A Structural Approach to Efficiency

When each phase is understood for what it contributes, efficiency gains become more targeted. Drafting does not need to begin from scattered documents. Submission does not need to rely on manual transfer. Review does not need to be slowed by version ambiguity.

Governance remains intact throughout. What changes is the amount of effort required to support it.

If you’d like to see how enterprise teams have reduced draft time by around 50% while preserving oversight, the next step is to examine a practical case example.

Where to Go Next

- Read next: How to Compress Your CDP First Draft Without Increasing Risk

- Go deeper: Cut CDP Preparation Time Without Losing Governance Control

- Or see how EA works in practice: Book a Demo